Cookbook: OpenAI Integration (Python)

This cookbook shows examples of the Langfuse integration for OpenAI (Python).

Follow the integration guide to add this integration to your OpenAI project.

Setup

The integration is compatible with OpenAI SDK versions >=0.27.8. It supports async functions and streaming for OpenAI SDK versions >=1.0.0.
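If you are unsure which OpenAI SDK is installed, you can check it against these two thresholds before running the examples. The sketch below is a hypothetical helper and is not part of either SDK. It reads the installed version with the standard library's importlib.metadata and compares only the numeric major.minor.patch parts.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def parse_version(v: str) -> tuple:
    """Return (major, minor, patch) from the leading numeric parts, e.g. "1.35.3" -> (1, 35, 3).

    Pre-release or dev suffixes such as "0rc1" are cut off, so "1.0.0rc1" parses
    as (1, 0, 0); that is precise enough for the two thresholds used here.
    """
    parts = []
    for piece in v.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # non-numeric component such as "dev0"
        parts.append(int(match.group()))
        if match.end() != len(piece) or len(parts) == 3:
            break  # suffix attached (e.g. "0rc1") or major.minor.patch already collected
    parts += [0] * (3 - len(parts))  # pad short versions: "2" -> (2, 0, 0)
    return tuple(parts)


def is_compatible(v: str) -> bool:
    """Hypothetical check: the integration requires OpenAI SDK >= 0.27.8."""
    return parse_version(v) >= (0, 27, 8)


def supports_async_and_streaming(v: str) -> bool:
    """Hypothetical check: async functions and streaming require OpenAI SDK >= 1.0.0."""
    return parse_version(v) >= (1, 0, 0)


try:
    installed = version("openai")
    print(installed, is_compatible(installed), supports_async_and_streaming(installed))
except PackageNotFoundError:
    print("the openai package is not installed")
```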

%pip install langfuse openai --upgrade

import os

# get keys for your project from https://cloud.langfuse.com
os.environ["LANGFUSE_PUBLIC_KEY"] = ""
os.environ["LANGFUSE_SECRET_KEY"] = ""

# your openai key
os.environ["OPENAI_API_KEY"] = ""

# Your host, defaults to https://cloud.langfuse.com
# For US data region, set to "https://us.cloud.langfuse.com"
# os.environ["LANGFUSE_HOST"] = "http://localhost:3000"

# instead of: import openai
from langfuse.openai import openai

# For debugging, checks the SDK connection with the server. Do not use in production as it adds latency.
openai.langfuse_auth_check()

Examples

Chat completion (text)

completion = openai.chat.completions.create(
    name="test-chat",
    model="gpt-3.5-turbo",
    messages=[
        {"role": "system", "content": "You are a very accurate calculator. You output only the result of the calculation."},
        {"role": "user", "content": "1 + 1 = "}],
    temperature=0,
    metadata={"someMetadataKey": "someValue"},
)

Chat completion (image)

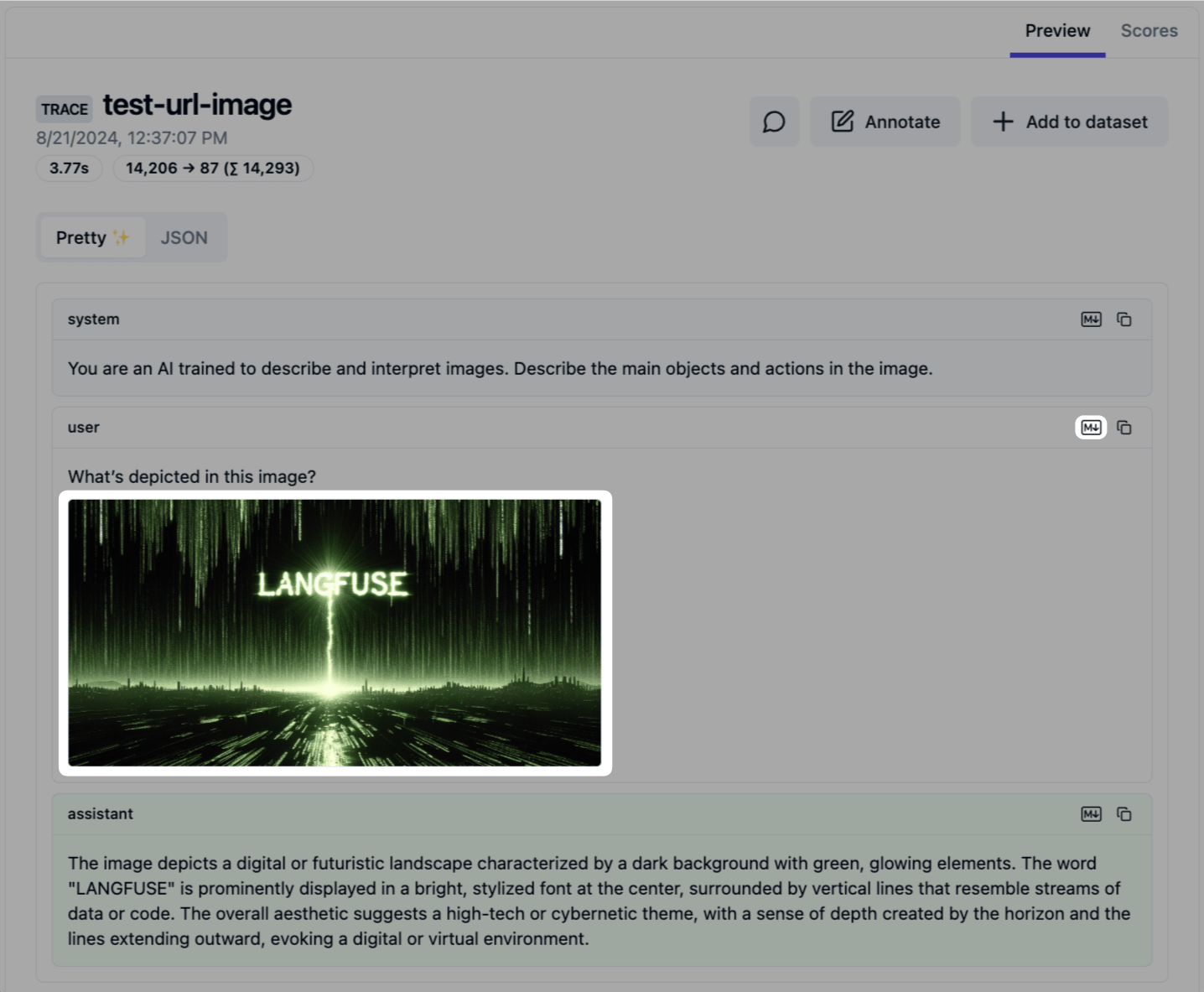

A simple example using OpenAI's vision functionality. Images can be passed in the user messages.

Langfuse supports images passed via URL. Base64-encoded images are not supported yet; see the roadmap for details.
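The message structure used in the request below can also be built with a small helper. This is a hypothetical sketch: image_user_message and is_base64_image_url are illustrative names and are not Langfuse or OpenAI APIs. The helper mirrors the text and image_url content parts of the example. It also flags data: URLs, because, as noted above, Langfuse does not yet support base64-encoded images, even though the OpenAI API accepts them.

```python
def is_base64_image_url(url: str) -> bool:
    """True for inline base64 images such as "data:image/png;base64,...."."""
    return url.startswith("data:") and ";base64," in url


def image_user_message(text: str, image_url: str) -> dict:
    """Build a user message with one text part and one image_url part."""
    if is_base64_image_url(image_url):
        # Accepted by OpenAI, but (per the note above) not yet supported by Langfuse.
        print("warning: base64 image; it may not be shown in Langfuse")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": text},
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


message = image_user_message(
    "What’s depicted in this image?",
    "https://static.langfuse.com/langfuse-dev/langfuse-example-image.jpeg",
)
print(message["content"][1]["image_url"]["url"])
```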

completion = openai.chat.completions.create(
    name="test-url-image",
    model="gpt-4o-mini",  # GPT-4o, GPT-4o mini, and GPT-4 Turbo have vision capabilities
    messages=[
        {"role": "system", "content": "You are an AI trained to describe and interpret images. Describe the main objects and actions in the image."},
        {"role": "user", "content": [
            {"type": "text", "text": "What’s depicted in this image?"},
            {
                "type": "image_url",
                "image_url": {
                    "url": "https://static.langfuse.com/langfuse-dev/langfuse-example-image.jpeg",
                },
            },
        ],
        }
    ],
    temperature=0,
    metadata={"someMetadataKey": "someValue"},
)

Go to https://cloud.langfuse.com or your own instance to see your generation.

Chat completion (streaming)

A simple example using OpenAI's streaming functionality.
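In a streamed response, each chunk carries only an incremental delta, and the final chunk's delta usually has no content (None). If you need the full text as well as the live output, a small accumulator can help. This is a sketch: collect_stream and fake_chunk are hypothetical names, and the fake chunks only imitate the shape of OpenAI SDK >= 1.0 stream chunks (chunk.choices[0].delta.content) so the snippet runs without an API key.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(chunks: Iterable) -> str:
    """Concatenate streamed delta contents into the full completion text.

    Chunks without choices, or whose delta content is None (e.g. the final
    chunk), contribute nothing.
    """
    parts = []
    for chunk in chunks:
        if not chunk.choices:
            continue
        content = chunk.choices[0].delta.content
        if content:
            parts.append(content)
    return "".join(parts)


def fake_chunk(content):
    """Stand-in for a real stream chunk, used only for this demo."""
    delta = SimpleNamespace(content=content)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


demo = [fake_chunk("Why did "), fake_chunk("the chicken..."), fake_chunk(None)]
print(collect_stream(demo))
```

With the real client, you would pass the completion object returned by the streaming call in place of demo. A stream can only be iterated once, so collect the text and print it from the same loop if you need both.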

completion = openai.chat.completions.create(
    name="test-chat",
    model="gpt-3.5-turbo",
    messages=[
        {"role": "system", "content": "You are a professional comedian."},
        {"role": "user", "content": "Tell me a joke."}],
    temperature=0,
    metadata={"someMetadataKey": "someValue"},
    stream=True,
)

for chunk in completion:
    # the final chunk's delta has no content (None), so print "" instead of "None"
    if chunk.choices:
        print(chunk.choices[0].delta.content or "", end="")

Chat completion (async)

A simple example using the OpenAI async client. It reads the Langfuse configuration either from environment variables or from attributes set on the openai module.
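The async client is most useful when several requests run concurrently. The sketch below shows the asyncio.gather pattern. A hypothetical fake_create coroutine stands in for async_client.chat.completions.create, so the snippet runs without an API key. Swapping in the real call, with the same keyword arguments as the example that follows, is an assumption you should verify in your own setup.

```python
import asyncio


async def fake_create(prompt: str) -> str:
    """Hypothetical stand-in for async_client.chat.completions.create(...)."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"answer to {prompt!r}"


async def run_all(prompts):
    # Schedule all requests at once; gather returns results in input order.
    return await asyncio.gather(*(fake_create(p) for p in prompts))


# In a plain Python script use asyncio.run(); in Jupyter, which already runs
# an event loop, use `await run_all(...)` directly instead.
results = asyncio.run(run_all(["1 + 1 = ", "1 + 100 = "]))
print(results)
```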

from langfuse.openai import AsyncOpenAI

async_client = AsyncOpenAI()

# Top-level await works in Jupyter; in a script, put this in an async function and run it with asyncio.run()
completion = await async_client.chat.completions.create(
    name="test-chat",
    model="gpt-3.5-turbo",
    messages=[
        {"role": "system", "content": "You are a very accurate calculator. You output only the result of the calculation."},
        {"role": "user", "content": "1 + 100 = "}],
    temperature=0,
    metadata={"someMetadataKey": "someValue"},
)

Go to https://cloud.langfuse.com or your own instance to see your generation.

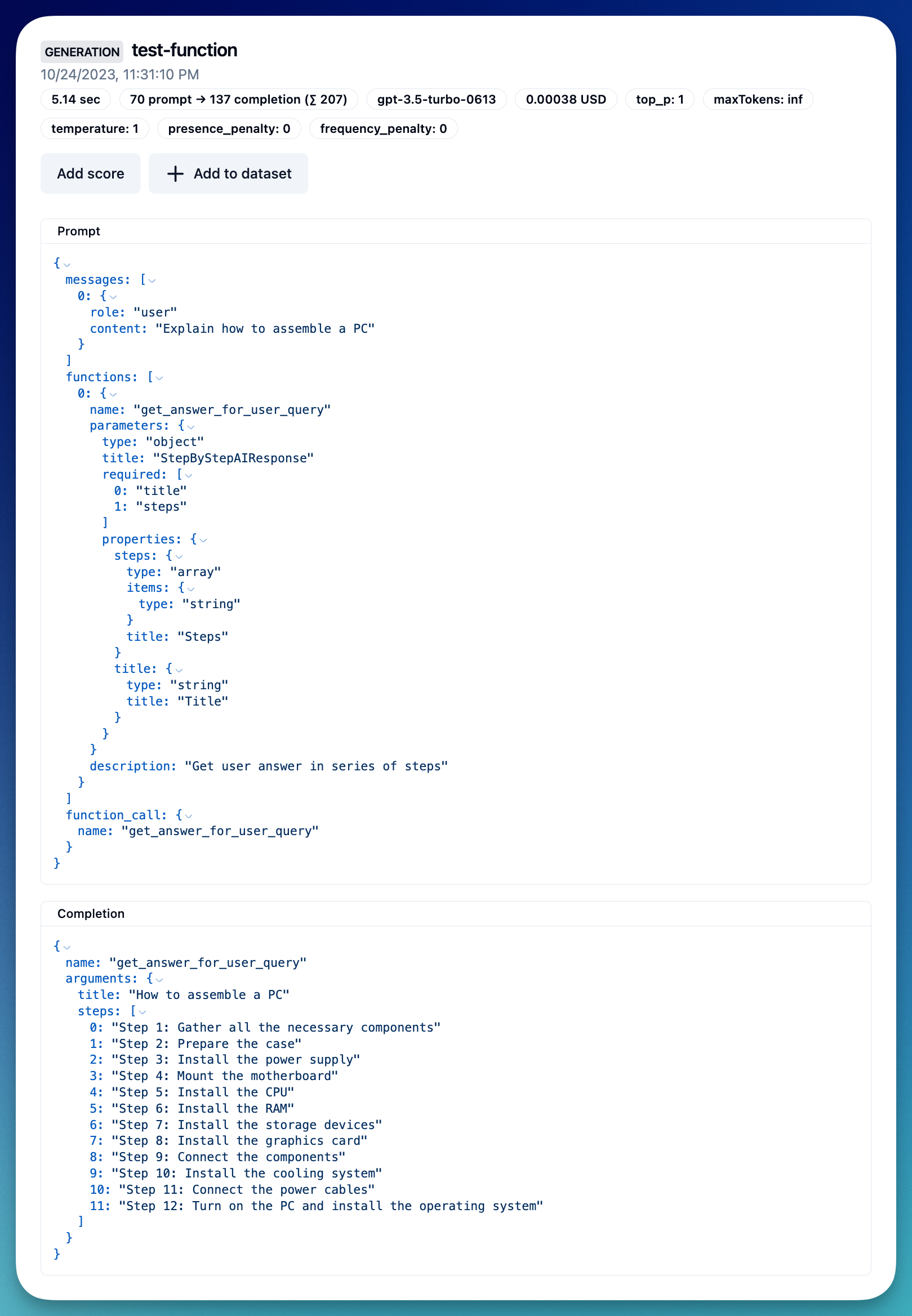

Functions

A simple example using Pydantic to generate the function schema.

%pip install pydantic --upgrade

import json
from typing import List

from pydantic import BaseModel

class StepByStepAIResponse(BaseModel):
    title: str
    steps: List[str]

# returns a JSON-schema dict (Pydantic v2; on Pydantic v1 use StepByStepAIResponse.schema())
schema = StepByStepAIResponse.model_json_schema()

response = openai.chat.completions.create(
    name="test-function",
    model="gpt-3.5-turbo",
    messages=[
        {"role": "user", "content": "Explain how to assemble a PC"}
    ],
    functions=[
        {
            "name": "get_answer_for_user_query",
            "description": "Get user answer in series of steps",
            "parameters": schema,
        }
    ],
    function_call={"name": "get_answer_for_user_query"},
)

# the forced function call returns its arguments as a JSON string
output = json.loads(response.choices[0].message.function_call.arguments)

Go to https://cloud.langfuse.com or your own instance to see your generation.
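The model is not guaranteed to return arguments that match the schema, so it is worth validating the parsed output before you use it. Below is a minimal stdlib-only sketch. parse_function_arguments and the StepByStep dataclass are hypothetical names. With Pydantic v2 installed, StepByStepAIResponse.model_validate_json(arguments) is the more idiomatic equivalent.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class StepByStep:
    """Stdlib mirror of the StepByStepAIResponse model (title + steps)."""
    title: str
    steps: List[str]


def parse_function_arguments(arguments: str) -> StepByStep:
    """Parse the JSON string from message.function_call.arguments and check its shape."""
    data = json.loads(arguments)  # raises json.JSONDecodeError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("arguments must be a JSON object")
    if not isinstance(data.get("title"), str):
        raise ValueError("missing or non-string 'title'")
    steps = data.get("steps")
    if not isinstance(steps, list) or not all(isinstance(s, str) for s in steps):
        raise ValueError("'steps' must be a list of strings")
    return StepByStep(title=data["title"], steps=steps)


sample = '{"title": "Assemble a PC", "steps": ["Install the CPU", "Add RAM"]}'
result = parse_function_arguments(sample)
print(result.title, len(result.steps))
```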

AzureOpenAI

The integration also works with the AzureOpenAI and AsyncAzureOpenAI classes.
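Instead of hard-coding credentials, you may prefer to read them from the environment. The sketch below is hypothetical: azure_settings, the AZURE_API_VERSION variable, and the example endpoint are assumptions. It returns the api_key, azure_endpoint, and api_version arguments used by the example below, which you could unpack into AzureOpenAI(**kwargs) imported from langfuse.openai. It also returns the deployment name to pass as model.

```python
import os
from typing import Mapping, Tuple


def azure_settings(env: Mapping[str, str] = os.environ) -> Tuple[dict, str]:
    """Collect AzureOpenAI constructor kwargs and the deployment name from env vars.

    The variable names are this sketch's own convention; AZURE_API_VERSION is optional.
    """
    required = ("AZURE_OPENAI_KEY", "AZURE_ENDPOINT", "AZURE_DEPLOYMENT_NAME")
    missing = [k for k in required if not env.get(k)]
    if missing:
        raise KeyError(f"missing environment variables: {', '.join(missing)}")
    client_kwargs = {
        "api_key": env["AZURE_OPENAI_KEY"],
        "azure_endpoint": env["AZURE_ENDPOINT"],
        "api_version": env.get("AZURE_API_VERSION", "2023-03-15-preview"),
    }
    # On Azure, the model argument of chat.completions.create is the deployment name.
    return client_kwargs, env["AZURE_DEPLOYMENT_NAME"]


demo_env = {
    "AZURE_OPENAI_KEY": "dummy-key",
    "AZURE_ENDPOINT": "https://example-resource.openai.azure.com/",
    "AZURE_DEPLOYMENT_NAME": "cookbook-gpt-4o-mini",
}
kwargs, deployment = azure_settings(demo_env)
print(kwargs["api_version"], deployment)
```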

AZURE_OPENAI_KEY = ""
AZURE_ENDPOINT = ""
AZURE_DEPLOYMENT_NAME = "cookbook-gpt-4o-mini"  # example deployment name

# instead of: from openai import AzureOpenAI
from langfuse.openai import AzureOpenAI

client = AzureOpenAI(
    api_key=AZURE_OPENAI_KEY,
    api_version="2023-03-15-preview",
    azure_endpoint=AZURE_ENDPOINT,
)

client.chat.completions.create(
    name="test-chat-azure-openai",
    model=AZURE_DEPLOYMENT_NAME,  # deployment name
    messages=[
        {"role": "system", "content": "You are a very accurate calculator. You output only the result of the calculation."},
        {"role": "user", "content": "1 + 1 = "}],
    temperature=0,
    metadata={"someMetadataKey": "someValue"},
)

Example trace: https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/7ceb3ee3-0f2a-4f36-ad11-87ff636efd1e

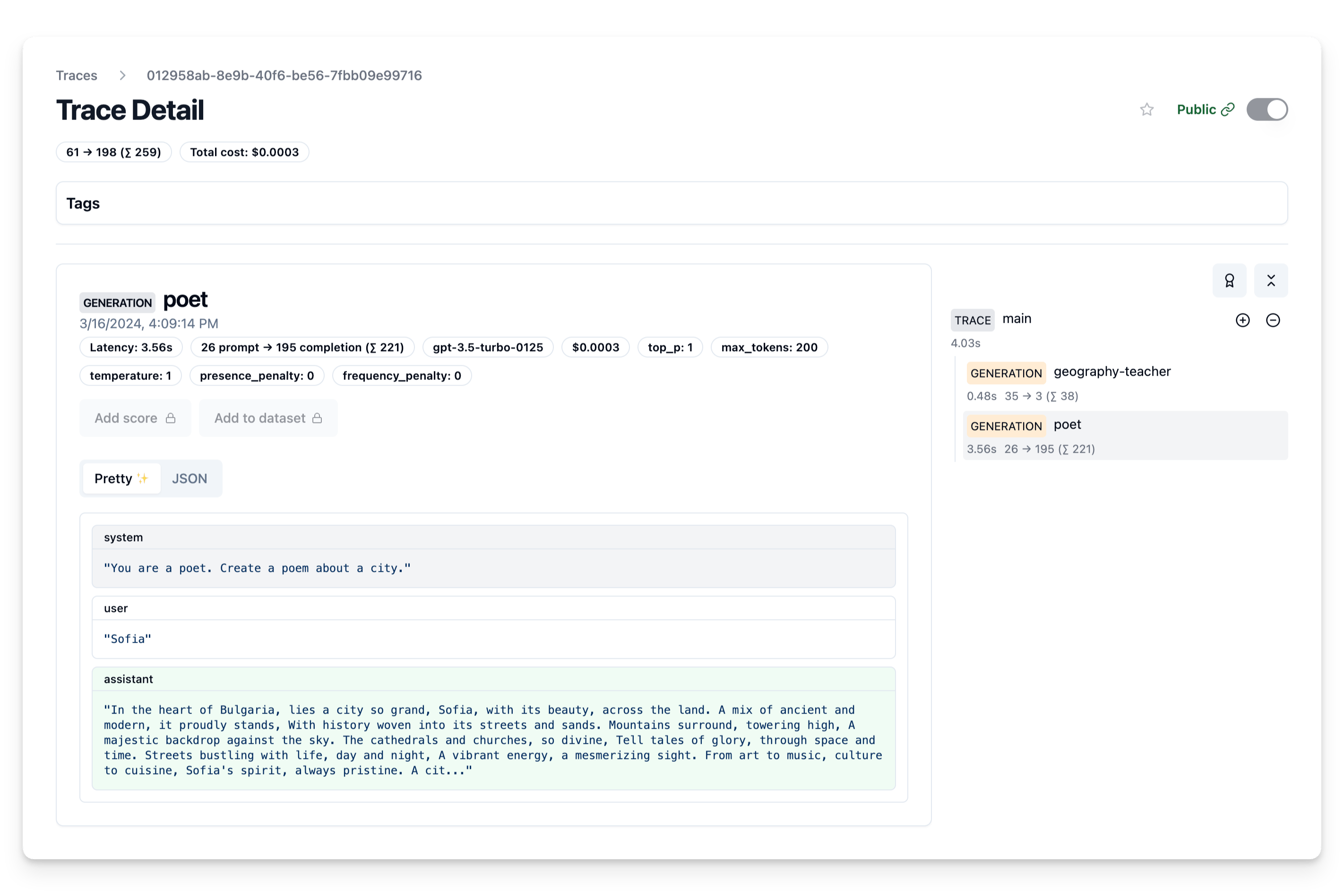

Group multiple generations into a single trace

Many applications require more than one OpenAI call. The @observe() decorator lets you nest all LLM calls of a single API invocation into the same trace in Langfuse.

from langfuse.openai import openai
from langfuse.decorators import observe

@observe()  # decorator to automatically create trace and nest generations
def main(country: str, user_id: str, **kwargs) -> str:
    # nested generation 1: use openai to get capital of country
    capital = openai.chat.completions.create(
        name="geography-teacher",
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a Geography teacher helping students learn the capitals of countries. Output only the capital when being asked."},
            {"role": "user", "content": country}],
        temperature=0,
    ).choices[0].message.content

    # nested generation 2: use openai to write poem on capital
    poem = openai.chat.completions.create(
        name="poet",
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a poet. Create a poem about a city."},
            {"role": "user", "content": capital}],
        temperature=1,
        max_tokens=200,
    ).choices[0].message.content

    return poem

# run main function and let Langfuse decorator do the rest
print(main("Bulgaria", "admin"))

Go to https://cloud.langfuse.com or your own instance to see your trace.

Fully featured: Interoperability with Langfuse SDK

The trace is a core object in Langfuse, and you can add rich metadata to it. See the Python SDK docs for full documentation.

Some of the functionality enabled by custom traces:

- custom name to identify a specific trace-type

- user-level tracking

- experiment tracking via versions and releases

- custom metadata
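If several code paths set these attributes, collecting them in one place can keep traces consistent. The sketch below is hypothetical, and TraceAttributes is not a Langfuse class. Its field names match keyword arguments passed to langfuse_context.update_current_trace in the example below, so the result could be passed as update_current_trace(**attrs.to_kwargs()) inside an @observe()-decorated function.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Optional


@dataclass
class TraceAttributes:
    """Hypothetical container for custom trace fields (name, user, session, release, tags, metadata)."""
    name: Optional[str] = None
    user_id: Optional[str] = None
    session_id: Optional[str] = None
    release: Optional[str] = None
    tags: List[str] = field(default_factory=list)
    metadata: Dict[str, str] = field(default_factory=dict)

    def to_kwargs(self) -> dict:
        """Drop unset fields (None, empty list, empty dict) so only explicit values are sent."""
        return {k: v for k, v in asdict(self).items() if v not in (None, [], {})}


attrs = TraceAttributes(
    name="City poem generator",
    user_id="admin",
    release="v0.0.21",
    metadata={"env": "development"},
)
print(attrs.to_kwargs())
```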

from uuid import uuid4

from langfuse.openai import openai
from langfuse.decorators import langfuse_context, observe

@observe()  # decorator to automatically create trace and nest generations
def main(country: str, user_id: str, **kwargs) -> str:
    # nested generation 1: use openai to get capital of country
    capital = openai.chat.completions.create(
        name="geography-teacher",
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a Geography teacher helping students learn the capitals of countries. Output only the capital when being asked."},
            {"role": "user", "content": country}],
        temperature=0,
    ).choices[0].message.content

    # nested generation 2: use openai to write poem on capital
    poem = openai.chat.completions.create(
        name="poet",
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a poet. Create a poem about a city."},
            {"role": "user", "content": capital}],
        temperature=1,
        max_tokens=200,
    ).choices[0].message.content

    # rename trace and set attributes (e.g., metadata) as needed
    langfuse_context.update_current_trace(
        name="City poem generator",
        session_id="1234",
        user_id=user_id,
        tags=["tag1", "tag2"],
        public=True,
        metadata={
            "env": "development",
        },
        release="v0.0.21",
    )

    return poem

# create random trace_id, could also use existing id from your application, e.g. conversation id
trace_id = str(uuid4())

# run main function, set your own id, and let Langfuse decorator do the rest
print(main("Bulgaria", "admin", langfuse_observation_id=trace_id))

Programmatically add scores

You can add scores to a trace, e.g., to record user feedback or a programmatic evaluation. Scores are used throughout Langfuse to filter traces and appear on the dashboard. See the docs on scores for more details.

The score is associated with the trace via its trace_id.
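User feedback is a common source of scores. The hypothetical helper below turns a thumbs-up/down vote into keyword arguments for langfuse.score(...), matching the trace_id, name, and value arguments used in the example below. The score name "user-feedback", the 1/0 encoding, and the optional comment field are assumptions; check them against the scores documentation.

```python
from typing import Optional


def feedback_score(trace_id: str, thumbs_up: bool, comment: Optional[str] = None) -> dict:
    """Map a thumbs-up/down vote to keyword arguments for a score call.

    The score name and the 1/0 encoding are this sketch's own choices.
    """
    if not trace_id:
        raise ValueError("a score must reference an existing trace_id")
    kwargs = {"trace_id": trace_id, "name": "user-feedback", "value": 1 if thumbs_up else 0}
    if comment:
        kwargs["comment"] = comment  # assumed to be accepted by the score call
    return kwargs


print(feedback_score("some-trace-id", thumbs_up=False, comment="wrong capital"))
```

In your application this would be used as langfuse.score(**feedback_score(trace_id, thumbs_up=True)), with trace_id taken from langfuse_context.get_current_trace_id() as shown below.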

from langfuse import Langfuse
from langfuse.decorators import langfuse_context, observe

langfuse = Langfuse()

@observe()  # decorator to automatically create trace and nest generations
def main():
    # get trace_id of current trace
    trace_id = langfuse_context.get_current_trace_id()

    # rest of your application ...

    return "res", trace_id

# execute the main function to generate a trace
_, trace_id = main()

# Score the trace from outside the trace context
langfuse.score(
    trace_id=trace_id,
    name="my-score-name",
    value=1,
)